By: Dr Mirjana Sokolovic-Perovic, Psychology and Clinical Language Sciences, m.sokolovic@reading.ac.uk

Overview

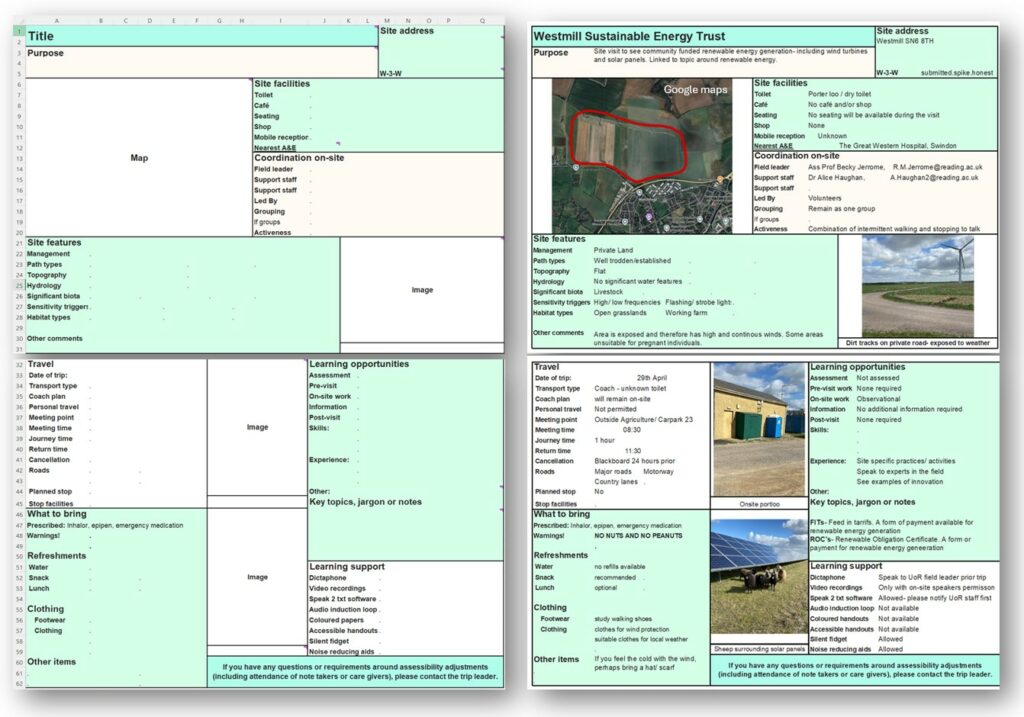

This post reports on a PLanT student-staff partnership project in which students and staff from the School of Psychology and Clinical Language Sciences worked together to explore the experience of international speech and language therapy (SLT) students. Student partners created an International Students’ Guide that provides additional support for international students when they join the programme.

Objectives

- To explore the experience of international speech and language therapy students

- To identify key areas where international students may need additional support from the School

- To create resources to support international students’ transition and to enhance their experience

Context

Student feedback has suggested that international SLT students face unique challenges that may affect their wellbeing, academic achievement and student satisfaction. SLT is an Allied Health profession, so as well as adjusting to a new culture and a new educational system, these students face the further challenge of understanding the UK health and care system, the British National Curriculum, and speech and language therapy as a profession.

Implementation

The main aim of the project was to better understand these challenges and to create a student-led resource to support international students’ transitions. Student partners were included in planning and decision-making at all stages of the project.

In the first phase of the project, student partners led focus group discussions, which identified several areas where additional support would be beneficial.

The second phase consisted of a student-led workshop. Based on the main themes from the focus group discussions, student partners designed a Guide for new international students providing detailed information about the issues identified. The focus was on supporting international students during the pre-arrival period and on joining the university. The Guide contains practical information (arriving in the UK, transportation, banking) as well as information about Welcome Week, about DBS (Disclosure and Barring Service) and Occupational Health checks, and some common terminology used in UK higher education. The Guide was sent to all international SLT offer holders in the summers of 2024 and 2025.

Impact

The International Students’ Guide received positive feedback. Students reported that it helped them feel more prepared for coming to the UK and for joining the course. We will continue to update it and share it with new students in the coming years.

The outcomes of this partnership have been shared within the School and the University and presented at national conferences. Within the School, a summary of our findings was shared with teaching staff, which initiated a change in staff perceptions of the unique circumstances of international students, enabling them to better identify and support students who may be struggling. Further, based on the highlights from the focus groups, the Admissions Team updated the information we share with candidates during the admission process and all the communication we send to international offer holders during the pre-arrival period. This communication now includes a separate section for international students and provides a checklist of actions they need to complete before joining the course, which offer holders found helpful.

Reflections

This has been a hugely successful project, not only because of its direct outcomes, but also because of the other initiatives that were inspired by it.

The project team thoroughly enjoyed working together. In the words of the lead student partner Marie Elena:

“It was a truly affirming experience, not only to be heard as a student but to relate with all students who participated in the project, and to create a piece that will help future international students ….”

On the other hand, because of the relatively short timeline for the project, we encountered some challenges when planning and scheduling our work. As students from all year groups and from both programmes were involved in the project, it was difficult to find times when all partners were free to meet, especially towards the end of the term.

Follow-up

At the suggestion of the student partners, I introduced a welcome meeting for current and new international SLT students, to foster the creation of an international student community and to help new students settle into the course. The feedback was excellent, and we plan to keep this event as part of our Welcome Week Programme.

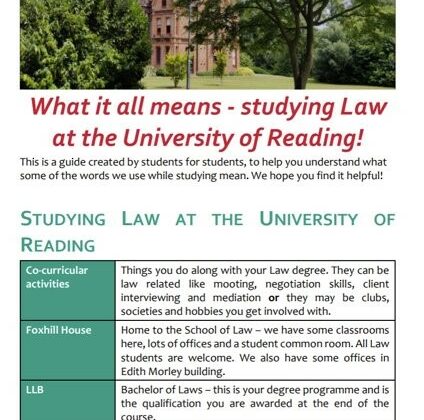

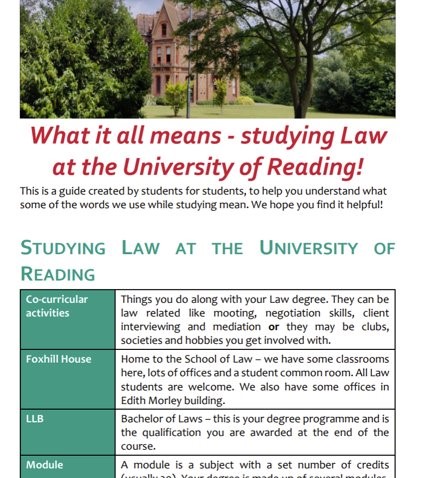

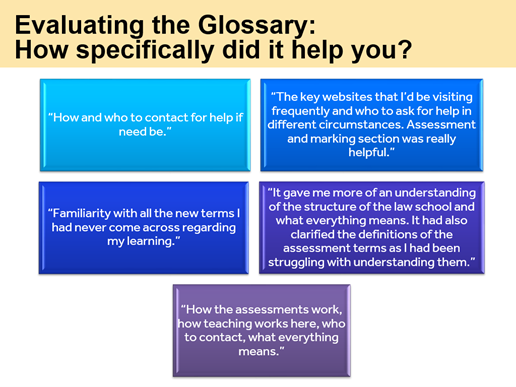

Following a suggestion from the focus groups, I led a PCLS-funded T&L partnership project to create an SLT Glossary, which was shared with first-year students when they started the course.

A special thank you goes to my student partners (in alphabetical order) Ameera, Emily, Jamielyn, Jessica, Jojo, Mariana, Marie Elena, Shannon and Tegan for their time, enthusiasm and creativity when working on this project!

References

- Sokolovic-Perovic, M., & Goddard, M-E. (2024, October 17). Across the pond: International SLT Students’ Guide [Invited talk]. CQSD PLanT Showcase: Applying for Partnerships in Learning and Teaching (PLanT) project funding.

- Sokolovic-Perovic, M. (2024, July 4). Across the pond: A PLanT Project [Staff talk]. PCLS Away Day.

- Sokolovic-Perovic, M. (2025, May 27 & 29). Supporting international students’ transitions using student-created resources [Paper presentation]. Change Agents’ Network (CAN) Conference. University of Plymouth, UK.

- Sokolovic-Perovic, M., & Low, J. (2025, September 4-5). A student-staff partnership as a catalyst for change: Co-creating support for international students [Paper presentation]. Researching, Advancing, and Inspiring Student Engagement (RAISE) Conference. University of Glasgow, UK.