By: Katie Barfoot and Anantha Krishna Sivasubramaniam, Psychology & Clinical Language Sciences. Corresponding author: katie.barfoot@reading.ac.uk

Overview

This project transformed a complex, inefficient dissertation-supervisor allocation system for Psychology students into a streamlined, transparent, and fair process. Through workflow redesign, clearer policy, improved communication, and the development of a bespoke allocation algorithm, it significantly reduced staff workload, enhanced student satisfaction, and delivered a more equitable experience for all.

Objectives

- Streamline and automate the supervisor allocation process to reduce administrative burden.

- Improve fairness, transparency, and consistency in matching students to supervisors.

- Increase the proportion of students receiving one of their preferred supervisors.

- Enhance student satisfaction and manage expectations through clearer communication and policy.

- Develop a scalable, efficient algorithm to support multi-programme allocations.

Context

The activity was undertaken to address the growing complexity of allocating up to 350 final-year Psychology students to dissertation supervisors and project partners across four programmes. Rising student numbers, varied staff capacity, and increasing NSS-driven expectations exposed inefficiencies in the manual process, prompting a need for a fairer, scalable, and more transparent allocation system.

Implementation

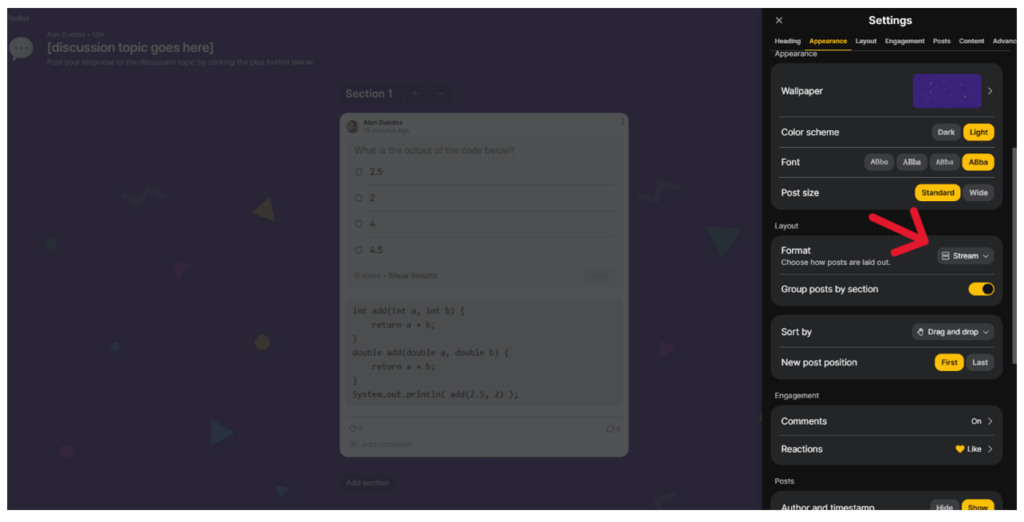

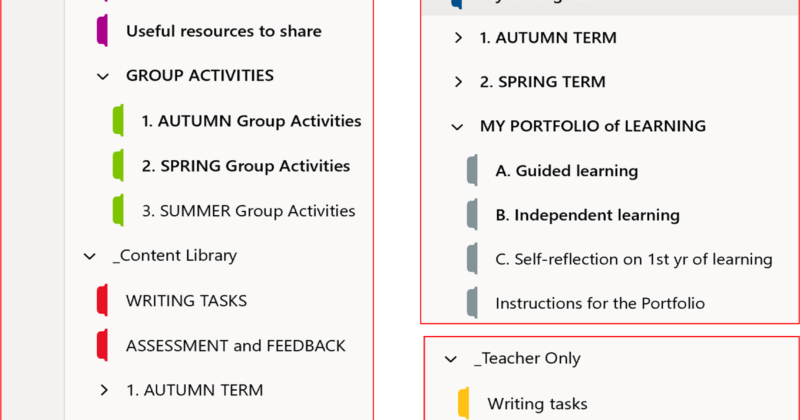

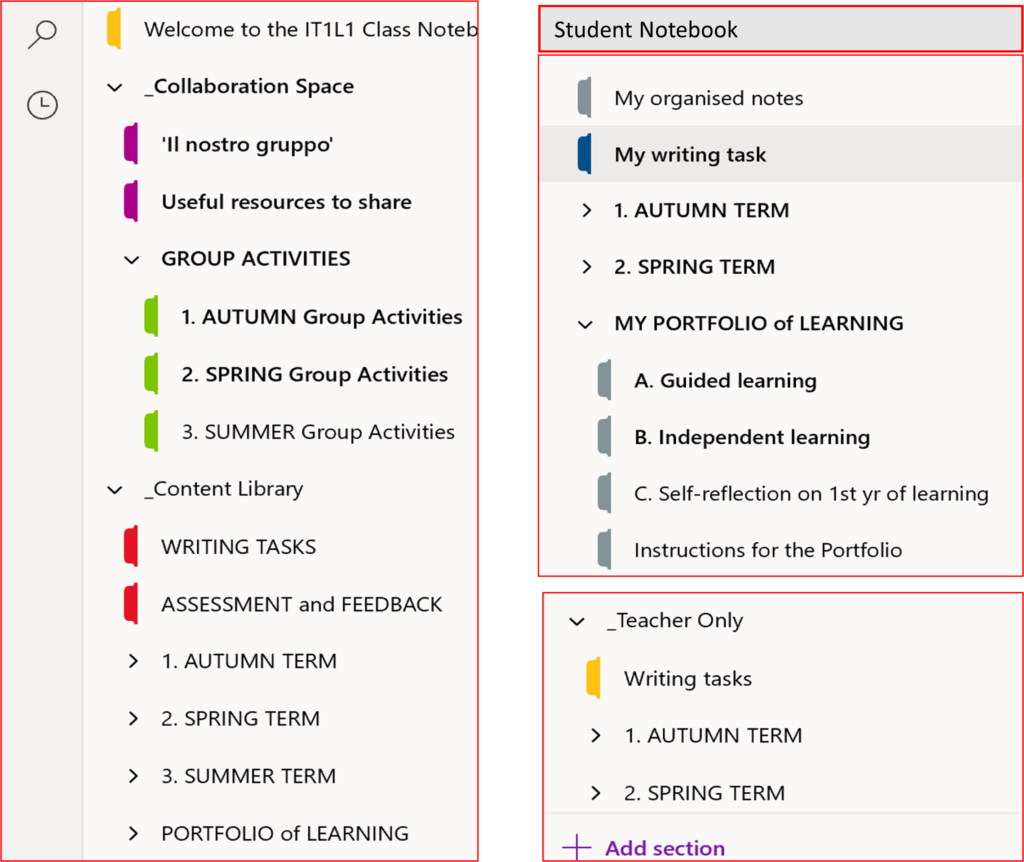

The implementation of the student-supervisor allocation process followed a stepwise approach. The first stage focused on streamlining data collection: MS Forms replaced email submissions, allowing students to submit their supervisor choices individually or with project partners. Responses were automatically exported into Excel, reducing administrative burden and minimising errors. A key improvement at this stage was a shift in workflow. Rather than attempting to manage every stage of the process alone, the module convenor (MC), Katie Barfoot, collaborated with the programme administration team, who brought valuable expertise in sourcing student class lists from the RISIS system and accessing individual student information, allowing the MC to cross-check spreadsheet data more reliably.

Initially, a published allocation algorithm was applied to match students to supervisors based on preferences and staff capacity. While this automated part of the process, Katie still had to spend considerable time preparing input files and ‘mopping up’ output files. Manual oversight was also required for partner matching and for cases of oversubscription, where particular staff topics were especially popular. As a result of such oversubscription, approximately 5-10% of the cohort did not receive any of their preferred supervisors and were understandably dissatisfied. Complaints and requests to change supervisor were also received from students who had been allocated one of their choices but not their first choice.

We realised how important the student perception of our process was in managing expectations and subsequent (dis)satisfaction. The term ‘ranking’ created a sense of hierarchy, making students feel only a first-choice allocation was successful. To shift expectations, we replaced rankings with ‘preferences’ and asked students to identify six supervisors they would be happy to work with out of a possible thirty, which framed receiving any of their six choices as a positive outcome. A transparent policy was also introduced for requesting supervisor changes, which standardised the process, reduced ad hoc requests, and helped to rebuild student trust. These changes were communicated in the project handbook and briefings, and were key to managing student expectations of the process and, in turn, reducing student dissatisfaction.

Although the system was improved, clear challenges remained: the algorithm needed to work across multiple degree programmes with overlapping supervisors – a part that was still being managed manually. Fairness needed to be prioritised within the algorithm over strict optimality, and the number of students missing out on their preferred supervisors needed to be minimised further. Katie reached out to Psychology Research Technician, Anantha Krishna Sivasubramaniam, in the hope that a collaboration could produce a bespoke, future-proofed system that met these objectives.

Anand first developed a Jisc Online Survey to collect student responses, producing output that integrated more easily with the R programming environment. Within R, he then developed a single algorithm that sequentially handled all student cohorts (across BSc Psychology, BSc Psychology with Neuroscience, BA Art and Psychology and MSci Clinical Psychology) while automatically updating supervisor capacities. Fairness was built in by randomising the order of students and their preferred supervisors at each allocation step. Students were initially assigned using a ‘greedy fill’ approach: a random student was paired with a random supervisor from their preference list who still had capacity. Any students still unallocated were then accommodated in a clean-up step, in which already-allocated students were moved to another of their preferred supervisors with capacity in order to free up a place.
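To make the two stages concrete, the following is a minimal illustrative sketch of a randomised greedy fill followed by a clean-up step. It is not the project's R code (which is available on request) but an independent Python approximation written from the description above. The function name `allocate`, the dictionary-based inputs, the `seed` parameter, and the choice to look only one move deep in the clean-up are all assumptions made for illustration. Partner matching is left out.

```python
import random


def allocate(preferences, capacity, seed=None):
    """Hypothetical sketch: randomised greedy fill plus a one-move clean-up.

    preferences: dict mapping student -> list of preferred supervisors
    capacity:    dict mapping supervisor -> number of places available
    Returns (allocation, unallocated, remaining_capacity).
    """
    rng = random.Random(seed)
    remaining = dict(capacity)  # copy so the caller's capacities are untouched
    allocation = {}

    # Stage 1: greedy fill. Visit students in random order and give each one
    # a randomly chosen preferred supervisor who still has a free place.
    students = list(preferences)
    rng.shuffle(students)
    for student in students:
        options = [s for s in preferences[student] if remaining.get(s, 0) > 0]
        if options:
            chosen = rng.choice(options)
            allocation[student] = chosen
            remaining[chosen] -= 1

    # Stage 2: clean-up. For each student still without a supervisor, try to
    # move one already-allocated student to another of *their* preferences
    # that has capacity, and hand the freed place to the unallocated student.
    unallocated = []
    for student in (s for s in students if s not in allocation):
        wanted = list(preferences[student])
        rng.shuffle(wanted)
        placed = False
        for supervisor in wanted:
            holders = [h for h, sup in allocation.items() if sup == supervisor]
            rng.shuffle(holders)
            for holder in holders:
                alternatives = [
                    s for s in preferences[holder]
                    if s != supervisor and remaining.get(s, 0) > 0
                ]
                if alternatives:
                    new_supervisor = rng.choice(alternatives)
                    allocation[holder] = new_supervisor
                    remaining[new_supervisor] -= 1
                    allocation[student] = supervisor
                    placed = True
                    break
            if placed:
                break
        if not placed:
            unallocated.append(student)  # left for manual allocation

    return allocation, unallocated, remaining


# Toy data (invented for illustration): s2 can only work with A,
# and s4 submitted no preferences.
toy_preferences = {"s1": ["A", "B"], "s2": ["A"], "s3": ["A", "B"], "s4": []}
toy_capacity = {"A": 1, "B": 2}
allocation, unallocated, remaining = allocate(toy_preferences, toy_capacity, seed=1)
print(allocation, unallocated, remaining)
```

In this toy case, if the greedy fill happens to give supervisor A's only place to s1 or s3, the clean-up moves that student to B so that s2 can take A. The student with no preferences is left for manual allocation. Because the sketch returns the remaining capacities, they could be passed in as the `capacity` for the next cohort, which is one possible way to mirror the sequential, capacity-updating handling of programmes described above.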

Impact

The project successfully achieved its aims of creating a fairer, more efficient, and future-proof supervisor allocation process. Automation significantly reduced the workload for the module convenor and administration team, while the bespoke algorithm enabled 278 students to receive one of their six preferred supervisors (2025/26) – an outcome not previously attainable. Only 45 students who did not submit preferences remained for manual allocation.

Clearer communication and the shift in language from “rankings” to “preferences” helped rebuild student trust and significantly reduced the volume of supervisor change requests compared to previous years, highlighting the importance of language within staff-student communications.

Staff confidence also increased due to the introduction of a transparent policy. An unexpected benefit was the smoother collaboration between academic, administrative, and technical teams, demonstrating the value of cross-departmental problem-solving.

Overall, the system reduced manual workload, ensured equitable allocations, and provided a foundation for future improvements – such as fully automating assignments for remaining students – making the process more robust and sustainable for years to come.

Reflections

Several factors contributed to the success of this activity. Early recognition that the existing system was no longer fit for purpose created momentum for change, while close collaboration between the module convenor, programme administration, and the Psychology Research Tech team ensured the solution addressed both pedagogic and logistical needs. Clear communication with students – particularly the shift in language from “rankings” to “preferences” and the introduction of a transparent policy – was essential in managing expectations and rebuilding trust. The bespoke algorithm was a major step forward, demonstrating the value of investing time in tailored technological solutions.

However, implementation could have been strengthened further. Automating the allocation of students who did not submit preferences remains an area for development, as manual assignment persists for this group. More robust integration with existing university systems, such as RISIS, could also reduce reliance on spreadsheet processing. In addition, earlier testing with historical data might have helped identify edge cases sooner. Despite these areas for improvement, the project represents a significant and positive transformation.

Follow up

The new algorithm and revised policy have improved fairness, efficiency, and student satisfaction, with fewer change requests. A successful handover to the incoming module convenor has been completed, ensuring the process is transparent, robust, and future-proofed, with ongoing plans to automate remaining allocations and enhance partner matching.

Further information

The R code for our bespoke algorithm is available on request from a.k.sivasubramaniam@reading.ac.uk.